Regional Fintech Institution, MENA · 2025

AI-Powered Legal Knowledge Assistant for a Regional Fintech Institution

- 40–60%: reduction in repetitive legal queries
- From days to minutes: time to access legal information
- +25–35%: employee productivity improvement
- 90%+: policy search accuracy
- Significant decrease in legal team workload

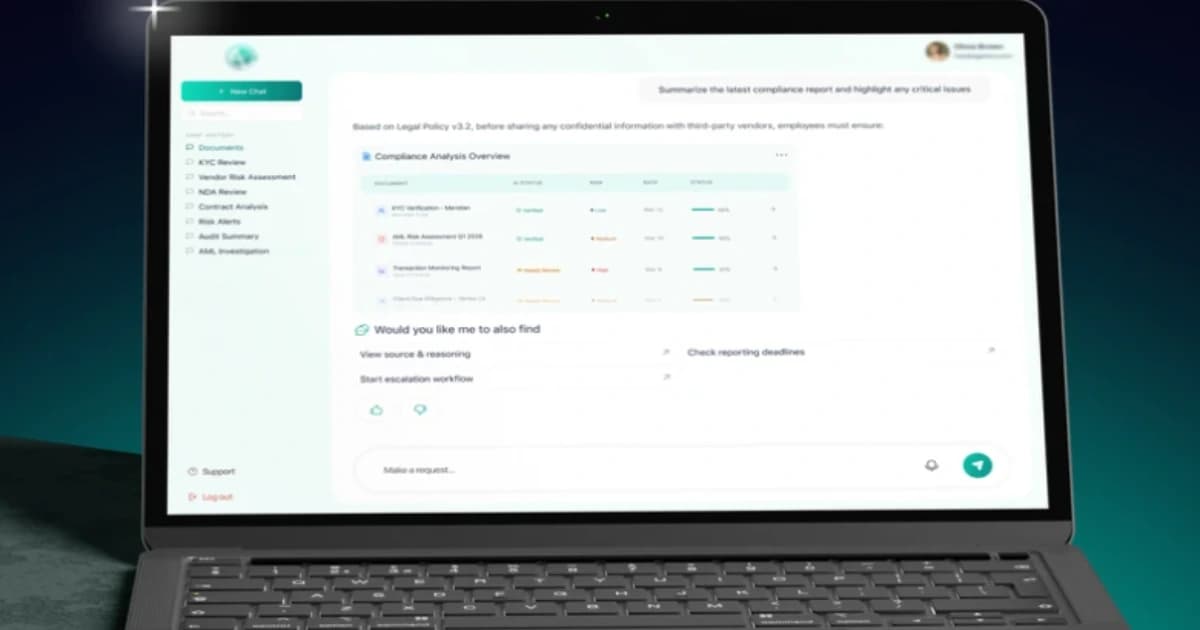

A large fintech organization in the MENA region partnered with Lumitech to improve access to legal and compliance knowledge across the company. Thousands of employees relied on internal policies and procedures for daily decision-making, but existing systems made it difficult to find accurate, up-to-date information quickly. Lumitech developed an AI-powered legal knowledge assistant that enables employees to ask questions in natural language and receive accurate, policy-based answers. The assistant acts as a single entry point into legal and compliance knowledge, reducing dependency on manual consultations and improving operational efficiency. The solution combines secure LLM orchestration, Retrieval-Augmented Generation (RAG), and role-based access control to ensure that responses are accurate, explainable, and compliant with internal and external regulations.

Challenge

The organization faced problems that are common, and critical, for regulated fintech companies:

- High volume of repetitive legal and compliance questions
- Strong dependency on legal team consultations
- Difficulty finding relevant policies in fragmented systems
- Risk of outdated or misinterpreted information
- Ineffective keyword-based search

At the same time, strict constraints made implementation complex:

- No use of public AI models, due to data sensitivity
- Requirement for strict role-based access control
- Continuous updates to legal documents
- Need for traceable, explainable answers linked to approved sources

The company needed a solution that balanced accuracy, security, and usability in a regulated environment.

Solution

Lumitech approached the project from a business and legal workflow perspective first, rather than starting from the technology. Key solution components:

- AI assistant with a natural language interface for employees
- Retrieval-Augmented Generation (RAG) grounded in internal documents
- Secure LLM orchestration within the company's infrastructure
- Role-based access control for data security
- Separation of:
  - policy explanations (what the policy says)
  - process guidance (what to do next)
- Intelligent document indexing (policies, SOPs, regulations)
- Integration into existing internal systems and portals

The project started as a pilot, which allowed the team to validate answer quality before scaling safely across departments.
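The case study does not describe how the document indexing handles the continuous updates mentioned in the challenge. As a minimal sketch, assuming each document arrives as plain text with a stable ID and an integer version number (both hypothetical conventions), the index below splits documents into chunks and replaces every chunk of a document when a newer version arrives, so superseded policy text can no longer be retrieved. `Chunk`, `DocumentIndex`, and `upsert` are illustrative names, and the sample travel-policy text is invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str      # hypothetical stable document identifier
    version: int     # version of the document this chunk came from
    doc_type: str    # e.g. "policy", "sop", "regulation"
    text: str


class DocumentIndex:
    """Keeps only the latest version of each document retrievable."""

    def __init__(self, max_words: int = 120) -> None:
        self.max_words = max_words
        self._chunks = {}    # doc_id -> list of Chunk
        self._versions = {}  # doc_id -> latest indexed version

    def _split(self, text: str) -> list:
        # Fixed-size word windows keep the sketch short; splitting on headings
        # or clauses would give chunks that map to citable sections.
        words = text.split()
        return [" ".join(words[i:i + self.max_words])
                for i in range(0, len(words), self.max_words)]

    def upsert(self, doc_id: str, version: int, doc_type: str, text: str) -> bool:
        """Index a document unless an equal or newer version is already indexed."""
        if self._versions.get(doc_id, -1) >= version:
            return False
        # Replacing the whole chunk list drops every chunk of the superseded version.
        self._chunks[doc_id] = [Chunk(doc_id, version, doc_type, part)
                                for part in self._split(text)]
        self._versions[doc_id] = version
        return True

    def chunks(self) -> list:
        """All currently retrievable chunks."""
        return [c for parts in self._chunks.values() for c in parts]


index = DocumentIndex(max_words=5)
index.upsert("TRAVEL-POLICY", 1, "policy", "Economy class applies to all business trips.")
index.upsert("TRAVEL-POLICY", 2, "policy",
             "Business class is permitted for flights longer than six hours.")
stale = index.upsert("TRAVEL-POLICY", 1, "policy", "An outdated copy.")
print(stale, [(c.version, c.text) for c in index.chunks()])
```

Rejecting equal or older versions means a late-arriving outdated copy cannot silently overwrite the approved text.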

Results

The AI assistant delivered clear operational improvements even at the pilot stage:

- Reduced volume of repetitive legal queries
- Faster access to compliance information (from days to minutes)
- Faster decision-making across teams
- Lower operational and regulatory risk through consistent, policy-based answers
- Greater transparency, with answers linked to official documents
- A scalable AI knowledge platform that can be extended to other departments

The solution improved both the efficiency and the reliability of access to legal knowledge without replacing the legal team.

Regulatory & Security Constraints

The solution was designed for a highly regulated fintech environment, where data security and compliance were critical from day one. All AI processing was implemented within a secure infrastructure, ensuring that no sensitive legal or internal data was exposed to public AI models. Access to information is strictly controlled through role-based permissions, so employees only see content relevant to their responsibilities. Additionally, every response generated by the assistant is fully traceable and linked to approved internal documents, ensuring transparency, auditability, and compliance with regulatory requirements.
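The access-control and traceability requirements can be illustrated with a small retrieval function. This is a sketch under stated assumptions, not the delivered implementation: each passage carries a hypothetical `allowed_roles` set and a citation label, permission filtering runs before ranking so restricted text never enters the model's context, and simple term overlap stands in for the embedding similarity a production RAG pipeline would normally use. Returning `None` when no permitted source matches is an assumed design choice, and the sample passages are invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Passage:
    source: str               # citation label shown to the user
    text: str                 # passage text from an approved document
    allowed_roles: frozenset  # hypothetical: roles permitted to see this passage


def _terms(text: str) -> set:
    """Lower-cased words with surrounding punctuation removed."""
    return {w.strip(".,;:!?()").lower() for w in text.split()} - {""}


def retrieve(query: str, user_roles: set, passages: list, k: int = 3) -> list:
    # 1. Permission filter first, so restricted text never reaches the LLM.
    visible = [p for p in passages if p.allowed_roles & user_roles]
    # 2. Rank by term overlap (a stand-in for vector similarity).
    q = _terms(query)
    scored = [(len(q & _terms(p.text)), p) for p in visible]
    scored = [(s, p) for s, p in scored if s > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:k]]


def grounded_context(query: str, user_roles: set, passages: list) -> Optional[dict]:
    """Context for the LLM plus the citations every answer must carry."""
    hits = retrieve(query, user_roles, passages)
    if not hits:
        return None  # assumed behavior: refer the user to the legal team instead of guessing
    return {"context": [p.text for p in hits], "sources": [p.source for p in hits]}


passages = [
    Passage("Expense Policy v3, s.2",
            "Meal expenses above 50 USD require manager approval.",
            frozenset({"employee", "finance"})),
    Passage("Sanctions Screening SOP v1, s.4",
            "Escalate any sanctions screening match to compliance within one hour.",
            frozenset({"compliance"})),
]

print(grounded_context("Who must approve meal expenses?", {"employee"}, passages))
print(grounded_context("How do I escalate a sanctions screening match?", {"employee"}, passages))
print(grounded_context("How do I escalate a sanctions screening match?", {"compliance"}, passages))
```

Filtering before ranking, rather than after generation, is what keeps a restricted passage from influencing an answer even indirectly.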

AI Architecture & Approach

Instead of relying on generic AI responses, the system uses a Retrieval-Augmented Generation (RAG) approach to ground answers in verified internal documents such as policies, procedures, and regulatory guidelines. The architecture separates informational responses (what the policy says) from process guidance (what actions should be taken), reducing the risk of misinterpretation and ensuring responsible AI usage. This approach allows the assistant to deliver accurate, context-aware answers while maintaining strict control over data and decision boundaries.
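One way to keep informational responses separate from process guidance is to route each question to a different instruction set before generation. The sketch below assumes a hypothetical keyword router (`classify`) and hypothetical prompt wording; a production system might route with a trained classifier or an LLM call instead. Both modes share the same grounding rule: answer only from the numbered sources and cite them. The sample SOP passage is invented.

```python
from enum import Enum


class Mode(Enum):
    POLICY_EXPLANATION = "policy_explanation"  # what the policy says
    PROCESS_GUIDANCE = "process_guidance"      # what actions should be taken


# Hypothetical cue phrases; a trained classifier or LLM router could replace them.
PROCESS_CUES = ("how do i", "what should i", "what do i do", "next step", "who do i contact")

INSTRUCTIONS = {
    Mode.POLICY_EXPLANATION: (
        "Explain what the cited policy text says. Do not recommend actions."
    ),
    Mode.PROCESS_GUIDANCE: (
        "List only the steps stated in the cited procedures. "
        "If a step is not covered, tell the user to contact the legal team."
    ),
}


def classify(query: str) -> Mode:
    q = query.lower()
    if any(cue in q for cue in PROCESS_CUES):
        return Mode.PROCESS_GUIDANCE
    return Mode.POLICY_EXPLANATION


def build_prompt(query: str, sources: list) -> tuple:
    """Return the routing mode and a prompt grounded in numbered sources.

    `sources` is a list of (citation_label, passage_text) pairs from retrieval.
    """
    mode = classify(query)
    numbered = "\n".join(f"[{i}] {label}: {text}"
                         for i, (label, text) in enumerate(sources, start=1))
    prompt = (
        f"{INSTRUCTIONS[mode]}\n"
        "Answer only from the sources below and cite them as [n]. "
        "If they do not answer the question, say so.\n\n"
        f"Sources:\n{numbered}\n\n"
        f"Question: {query}"
    )
    return mode, prompt


mode, prompt = build_prompt(
    "How do I register a new vendor?",
    [("Vendor Onboarding SOP v2, s.1",
      "Submit vendor requests through the procurement portal.")],
)
print(mode)
print(prompt)
```

Recording the returned `mode` alongside the cited sources would also give reviewers a record of which kind of answer each response was.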

Business Impact

The assistant transformed how legal knowledge is accessed across the organization. Instead of waiting for manual consultations, employees can now receive reliable answers within minutes. This significantly reduced the workload on legal teams, allowing them to focus on high-value, complex cases instead of repetitive queries. At the same time, business units gained faster decision-making capabilities, improved operational efficiency, and reduced regulatory risk through consistent access to up-to-date, policy-based information.